The data was visible. The meaning was wrong.

By Debleena Majumdar & Arjo Basu

In the summer of 1854, cholera tore through London. Entire neighbourhoods were struck within weeks. Mortality rose sharply. Panic followed. The prevailing explanation was miasma—disease spreading through bad air—and the observable patterns appeared to support that belief.

Yet people kept dying.

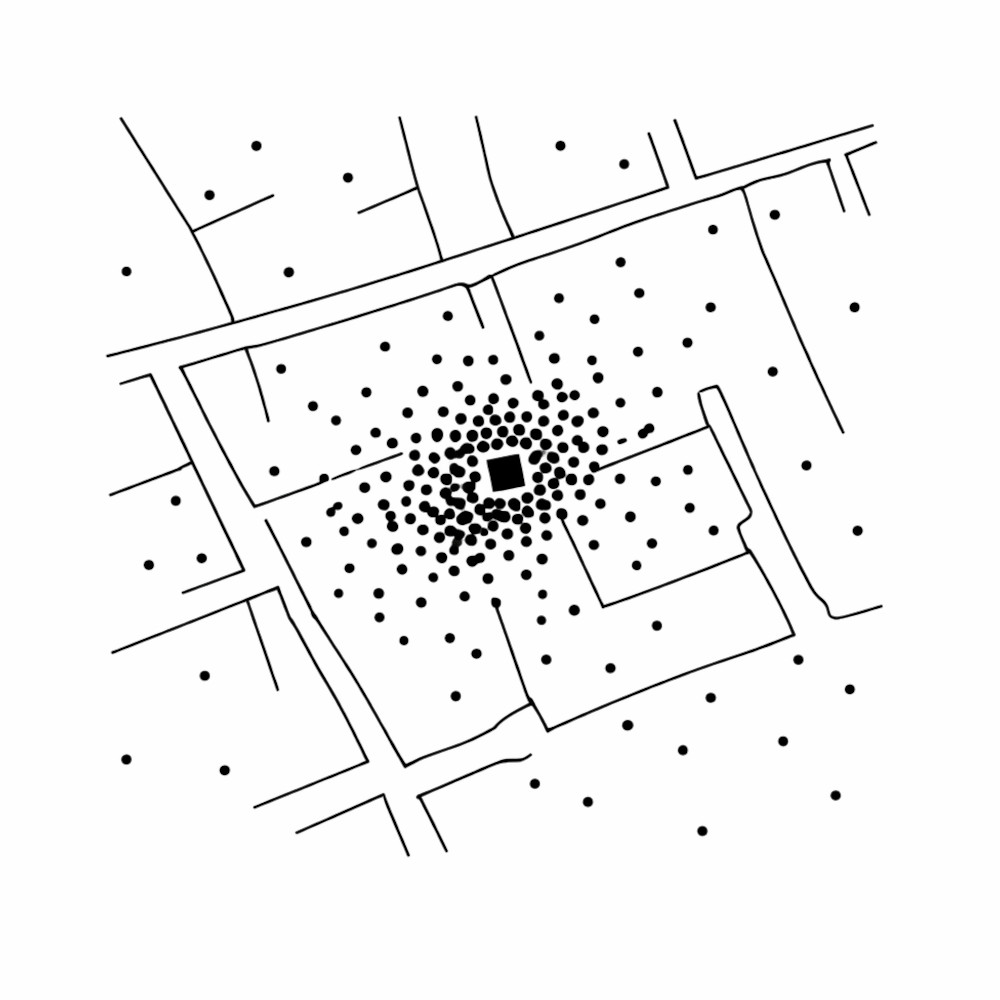

John Snow, a London physician, noticed something the dominant theory could not explain. When he plotted the deaths on a simple map, they clustered around a single public water pump on Broad Street. When the pump handle was removed, infections in that area declined.

Snow did not discover new data. He changed how existing data was interpreted.

Strategy failures today often resemble that moment: the facts are visible, but the meaning attached to them is wrong.

Ontology and Semantics

Ontology concerns what exists in an organisational world—the categories, metrics, and classifications that structure reality. Think customer segments, risk ratings, performance bands, compliance status, strategic priorities. These categories determine what gets measured, reported, funded, and governed.

Semantics concerns what those categories mean in practice and how they translate into action.

Organisations function through an interplay of nouns and verbs. The nouns stabilise reality and enable coordination at scale. The verbs move the system: lend, approve, invest, expand, prescribe, incentivise, withdraw.

Semantics is the bridge between the noun and the verb. It determines what we do because something carries a particular label. When that bridge shifts, behaviour shifts—even if the underlying data remains correct.

When Categories Outlive Their Conditions

The financial crisis of 2008 offers a stark example. Complex securities carried AAA ratings derived from sophisticated models and historical data. Within those assumptions, the ratings were internally coherent. Over time, however, AAA came to imply safety across contexts: regulatory safety, capital efficiency, systemic resilience. That interpretation depended on the assumption that defaults on the underlying assets would remain only weakly correlated.

The ontology did not change overnight. What changed was the meaning attached to the rating. When correlations broke down during the crisis, the rating categories persisted but their behavioural implications no longer matched reality. Capital continued to flow under an outdated interpretation of risk.

Healthcare provides a parallel. In the 1990s, pain began to be treated as a vital sign. Hospitals routinely recorded patient-reported pain scores, and satisfaction surveys increasingly included questions about pain control. These measures were tracked closely and, in some systems, linked to incentives. The category of pain management remained stable; what shifted was how success was defined. Lower reported pain and higher satisfaction became visible markers of quality. Prescribing behaviour adapted accordingly, and the consequences, which have been widely linked to increased opioid prescribing, unfolded over time.

A more contemporary example lies in performance management. Many organisations adopted employee engagement scores as indicators of cultural health and productivity. The category was measurable and consistent. Over time, improvement in survey scores began to stand in for genuine cultural progress, even when underlying incentives and behaviours remained unchanged. Visible movement in metrics became a proxy for deeper organisational health.

In each case, information was not absent. The meaning attached to it had drifted.

AI and the Acceleration of Ontology

Artificial intelligence intensifies this challenge.

AI systems excel at classification. They score, rank, cluster, and predict at scale. Risk probabilities, customer segments, fraud alerts, diagnostic suggestions, churn likelihood—these categories become sharper and more consistent across an organisation. In effect, AI stabilises ontology.

Consider a bank deploying AI-driven credit scoring. The model analyses thousands of variables and produces highly accurate default probabilities. The data is robust, and the model performs well under current conditions. Over time, however, market structures change, borrower behaviour shifts, and economic correlations evolve. The score may remain statistically valid within its trained boundaries, but the action thresholds attached to that score—lending limits, pricing bands, capital allocation—may no longer reflect current realities. If decision rules remain anchored to earlier assumptions, risk accumulates quietly.
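The gap between a valid score and a stale action rule can be made concrete. The sketch below is illustrative only: the `ScoreBand` class, the `drifted_bands` function, the tolerance of 1.5, and all the figures are hypothetical and are not drawn from any real lending system. It simply compares the default rate each band was assumed to carry when its lending rule was written with the rate observed today.

```python
from dataclasses import dataclass


@dataclass
class ScoreBand:
    """A label (the noun) plus the assumption behind its action rule."""
    label: str
    expected_default_rate: float  # rate assumed when the rule was set (hypothetical)


def drifted_bands(bands, observed, tolerance=1.5):
    """Return labels whose observed default rate exceeds `tolerance`
    times the rate assumed when the action rule was written."""
    return [
        band.label
        for band in bands
        if observed.get(band.label, 0.0) > tolerance * band.expected_default_rate
    ]


# Hypothetical figures, for illustration only.
bands = [
    ScoreBand("low risk", expected_default_rate=0.01),
    ScoreBand("medium risk", expected_default_rate=0.05),
]
observed = {"low risk": 0.03, "medium risk": 0.055}

flagged = drifted_bands(bands, observed)
print(flagged)  # ['low risk']
```

Here the model may still rank borrowers correctly, yet the "low risk" label no longer means what the lending rule assumes. The check targets the rule, not the score.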

As AI becomes embedded in credit decisions, healthcare triage, compliance monitoring, supply chains, and strategic dashboards, organisations gain precision in classification. The vulnerability lies in assuming that better categories automatically produce better decisions. Strategy falters when yesterday’s meanings are applied to today’s conditions.

In an AI-shaped world, truth may reside in the numbers. Advantage will belong to organisations that can update meaning faster than they update data.

The Symptoms of Semantic Drift

Semantic drift rarely announces itself directly. It shows up as organisational friction. Definitions become contested. Exceptions multiply. Workarounds harden into informal policy. Escalations increase because interpretation feels unstable. Authority recentralises in response to ambiguity.

By the time these symptoms are visible at the executive level, adaptability has already weakened.

Snow’s intervention worked because he questioned the interpretive frame, not the data itself. The deaths were visible to everyone. The explanation was not.

What Leadership Looks Like

Leaders can begin by examining the verbs attached to their most consequential nouns. What actions follow when something is labelled low risk, high potential, strategic, or compliant? Under what conditions were those action rules defined? When might they need revision?

Boundary conditions should be explicit. Categories should be stress-tested against shifts in correlation, incentives, or behaviour. Strategy conversations should revisit foundational definitions, not only performance against them.

In practice, this may involve revisiting decision thresholds attached to AI outputs, rotating teams across functions to challenge assumptions, or conducting periodic reviews of whether critical labels still map to the right actions.
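One way to make such reviews routine is to record each label alongside the action it triggers, the assumptions behind that action, and the date the rule was last examined. The following is a minimal sketch under assumed names: `ActionRule`, `overdue_rules`, the 365-day review window, and the example entries are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List


@dataclass
class ActionRule:
    label: str              # the noun, e.g. "low risk"
    action: str             # the verb the label triggers
    assumptions: List[str]  # boundary conditions, stated explicitly
    last_reviewed: date


def overdue_rules(rules, today, max_age_days=365):
    """Return labels whose action rule has not been reviewed
    within the last `max_age_days` days."""
    cutoff = today - timedelta(days=max_age_days)
    return [rule.label for rule in rules if rule.last_reviewed < cutoff]


# Hypothetical entries, for illustration only.
rules = [
    ActionRule(
        label="low risk",
        action="approve at standard pricing",
        assumptions=["borrower defaults are weakly correlated"],
        last_reviewed=date(2022, 1, 15),
    ),
    ActionRule(
        label="strategic",
        action="fund ahead of other projects",
        assumptions=["the target market is still growing"],
        last_reviewed=date(2024, 6, 1),
    ),
]

stale = overdue_rules(rules, today=date(2024, 9, 1))
print(stale)  # ['low risk']
```

A register like this does not say whether a rule is wrong. It only shows which meanings have gone unexamined longest, and that is where a review can start.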

Early signs of strain deserve attention: rising definitional disputes, growing reliance on exceptions, increasing requests for escalation. These often signal that the link between signal and action is loosening.

Holding Meaning Loosely

John Snow’s map endures because it shows how transformation often begins: not with new information, but with reinterpretation. The facts were present. The meaning had to change.

In environments saturated with data and increasingly organised by AI, the strategic challenge is maintaining alignment between what exists and what it implies.

For leaders, the discipline is straightforward but demanding: revisit the meaning behind the metrics, re-examine the action rules attached to core categories, and treat semantic drift as a strategic risk, not an operational nuisance.

Strategy fails less from lack of information than from failure to renew meaning.