When AI Writes the Code, Who Guards the System?

As AI-assisted coding accelerates software development, enterprises face a new challenge: ensuring governance, accountability, and safety keep pace with machine-speed innovation

On March 5, 2026, Amazon’s retail engine faltered in one of its most critical markets. Customers across parts of its U.S. retail platform found themselves unable to complete purchases or access key parts of the shopping experience. The disruption lasted several hours before service was restored later that evening.

Inside the company, the scale of the failure quickly became apparent. Internal estimates, reported later, suggested that millions of orders may have been affected during the disruption window—a stark reminder of how deeply modern commerce now depends on complex software systems operating without interruption.

For Amazon, such incidents are never taken lightly. What caught engineers’ attention was not just the outage itself, but a broader shift: the growing role that AI-assisted development tools are beginning to play inside software pipelines.

In recent months, reports had surfaced about another disruption inside Amazon Web Services linked to its internal AI coding assistant, Kiro. In an incident in late 2025, engineers investigating a system issue allowed the tool to intervene in infrastructure supporting a cloud service. According to reports, the system chose to delete and recreate parts of the environment, triggering an outage that took roughly 13 hours to resolve.

Amazon has emphasised that the root cause in these cases was not artificial intelligence itself but misconfigured permissions and insufficient safeguards governing how such tools interact with production systems.

Yet internally the pattern raised uncomfortable questions. Internal briefings, as reported later, described several incidents as having an unusually large “blast radius”—failures capable of cascading rapidly across complex production systems.

Which raises a broader question that many leadership teams are only beginning to confront: When software can be written—and sometimes acted upon—at machine speed, how do organisations ensure that governance, accountability, and safety mechanisms keep pace?

The conversation now happening inside boardrooms

For much of the past two years, the promise of AI-assisted software development has been framed in terms of productivity.

Coding assistants can generate code, suggest fixes, automate testing, and help developers navigate complex codebases in seconds. Across the technology industry, adoption has been remarkably rapid. According to global research by Gartner, 63% of organisations are already piloting, deploying, or have deployed AI code assistants inside their engineering environments. The firm predicts that by 2028, 75% of enterprise software engineers will be using such tools, up from less than 10% in early 2023.

In other words, AI-assisted coding is moving rapidly toward the mainstream of modern software development. Seen from one perspective, this is a remarkable productivity story. Software development—long constrained by the speed of human work—is beginning to operate at machine velocity.

But as these tools move deeper into enterprise technology stacks, the conversation in leadership circles is beginning to shift. The question is no longer simply how much faster software can be built. Increasingly, boards and executive teams are asking a different question: How much risk might organisations be absorbing without fully realising it?

Viewed through that lens, the recent incidents at Amazon begin to look less like isolated engineering failures and more like early signals of a broader structural shift. AI-assisted development does not simply accelerate coding. It changes how software systems evolve, how decisions are made inside infrastructure, and how quickly mistakes can propagate across digital operations.

And that shift introduces three structural risks enterprises must begin to manage deliberately: speed, authority, and opacity.

When software can be written—and sometimes acted upon—at machine speed, governance and accountability must evolve just as quickly.

The three structural risks AI introduces into software development

The risks emerging from AI-assisted coding are not simply technical glitches or isolated engineering mistakes. They stem from deeper shifts in how software systems are created, modified, and governed.

At its core, AI-assisted development changes three fundamental properties of modern software systems: how fast they evolve, who—or what—has authority inside them, and how well humans can understand what is happening within them.

Speed risk: Code moving at machine velocity

Software development has historically been constrained by the pace of human work. Engineers wrote code, reviewed it, tested it, and gradually moved it through deployment pipelines.

AI-assisted tools compress that cycle dramatically. Developers can generate large volumes of code in seconds, automate debugging, and deploy fixes far more rapidly than before.

The productivity gains are real. But speed also changes the physics of failure.

Imagine a developer using an AI assistant to quickly generate a patch for a checkout issue during a high-traffic sales event. The fix appears to work and is deployed quickly. But buried inside the change is a small logic error affecting how prices are calculated. Within minutes, thousands of transactions begin processing incorrectly before anyone notices.

In a world where code moves at machine speed, mistakes can propagate just as quickly.

Authority risk: Software agents with real operational power

The second shift is more subtle but potentially more consequential.

Many AI coding tools are evolving from passive assistants into active participants in development workflows. They interact with infrastructure, modify configuration files, trigger deployments, and automate operational tasks.

In other words, they are no longer just suggesting code; they are influencing how systems behave.

Consider a scenario where an AI-powered DevOps assistant is asked to fix a failing cloud service. In attempting to resolve the issue, the system automatically modifies infrastructure settings and restarts components across several environments. If those actions occur with overly broad permissions, a local fix can cascade into a broader outage.

This raises a fundamental governance question: When software that writes code also participates in executing it, where does accountability ultimately reside?
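
One way to picture the "overly broad permissions" problem is as a scope check that sits between an assistant and the infrastructure it can touch. The sketch below is illustrative only: the `AgentScope` type, the environment names, and the action names are assumptions made for this example, not a description of how Kiro or any cloud provider's tooling works.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class AgentScope:
    """Hypothetical permission scope granted to an AI assistant."""
    environments: frozenset[str]  # environments the agent may touch
    actions: frozenset[str]       # operations the agent may perform


def is_allowed(scope: AgentScope, environment: str, action: str) -> bool:
    """Return True only if both the environment and the action are in scope."""
    return environment in scope.environments and action in scope.actions


# A deliberately narrow scope: restart services in staging, nothing else.
narrow = AgentScope(
    environments=frozenset({"staging"}),
    actions=frozenset({"restart_service"}),
)

print(is_allowed(narrow, "staging", "restart_service"))     # True
print(is_allowed(narrow, "production", "restart_service"))  # False
print(is_allowed(narrow, "staging", "delete_environment"))  # False
```

With a scope this narrow, a request to delete and recreate an environment is refused before it runs, so the damage a mistaken "fix" can do is bounded by what the scope permits.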

Opacity risk: Systems growing beyond human understanding

The third shift concerns something less visible but equally important—comprehension.

Large enterprise systems are already complex, often built over decades by teams of engineers working across multiple layers of infrastructure. AI-assisted coding accelerates the growth of these systems, often generating new code faster than engineers can fully internalise it.

Over time, this can erode institutional knowledge. Engineers may find themselves operating systems whose internal logic they did not design and cannot easily trace.

More subtly, it weakens the tacit organisational knowledge that underpins complex systems—why decisions were made, what trade-offs were accepted, and who is accountable for them.

When failures occur, this lack of transparency complicates incident response, forensic analysis, and regulatory compliance.

In effect, the system begins to outpace the humans responsible for governing it.

How these risks are already surfacing inside enterprises

For many technology leaders, these risks are no longer theoretical.

Boards are encountering them most visibly in three areas: security exposure, legal liability, and operational fragility.

Security vulnerabilities and the rise of “Shadow AI”

Developers under pressure to deliver quickly often experiment with public AI tools outside enterprise controls.

This creates what security teams increasingly describe as Shadow AI.

The risk is real. In 2023, engineers at Samsung Electronics reportedly leaked sensitive internal source code while using ChatGPT to review code and summarise internal documents, prompting the company to restrict employee use of generative AI tools.

AI-generated code can also introduce vulnerabilities. Academic research evaluating AI coding tools such as GitHub Copilot has found that in some test scenarios, a significant share of generated code contained security weaknesses, including insecure cryptographic practices and injection vulnerabilities.

What appears to be a productivity boost can quietly expand an organisation’s attack surface.

Intellectual property and legal exposure

Software has become one of the most valuable intellectual assets for modern enterprises. AI coding assistants complicate that equation.

Large language models are trained on vast repositories of publicly available code. When developers prompt these tools for solutions, the generated output can sometimes reproduce fragments of code governed by open-source licenses.

If incorporated into proprietary products without proper compliance, companies may find themselves exposed to legal disputes or licensing obligations.

The picture is further complicated because this training code carries widely varying licensing terms, from permissive to copyleft. In response, organisations are strengthening governance: using automated checks for licensing conflicts, requiring clearer documentation of AI contributions, and involving legal teams more closely in development workflows.

The issue is not just technical. It is legal, strategic, and still evolving.

Equally concerning is the risk of data leakage when developers share internal code or system configurations with external AI tools during debugging.

Accelerated technical debt and operational fragility

AI-assisted coding makes it easy to generate large volumes of functional code quickly. But functionality does not necessarily translate into maintainability.

When developers rely heavily on AI-generated solutions without deeply understanding the architecture behind them, systems can accumulate hidden complexity. Over time, these shortcuts become technical debt.

As layers of AI-generated code accumulate, systems become harder to debug, harder to modify, and increasingly fragile.

Designing guardrails for the age of AI-assisted development

The lesson from these developments is not that AI-assisted coding should be avoided. The productivity gains are simply too significant.

The real challenge is governance.

Enterprises must now design guardrails to ensure acceleration does not come at the cost of resilience, security, or accountability.

Three shifts are becoming increasingly important.

Institutionalise AI governance. Organisations need clear policies governing which AI tools are approved, what data can be shared with them, and how AI-generated code should be reviewed.

That means being explicit about where these tools can be used—separating low-risk tasks from high-impact actions in production systems—and setting clear boundaries around what data can be shared externally.

Increasingly, companies are channelling usage through enterprise-managed environments rather than allowing unrestricted access to public tools.

The goal is to ensure that AI use is intentional, controlled, and auditable.
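
As a rough illustration, a policy of this kind can be written down as data rather than left as guidance. The sketch below assumes a simple three-level data classification and two placeholder tool names; both are hypothetical and would differ in any real organisation.

```python
from enum import IntEnum


class DataClass(IntEnum):
    """Hypothetical data sensitivity levels, lowest to highest."""
    PUBLIC = 1
    INTERNAL = 2
    CONFIDENTIAL = 3


# Hypothetical policy: the most sensitive data each approved tool may receive.
# Tool names are placeholders, not real products.
TOOL_POLICY = {
    "enterprise-assistant": DataClass.CONFIDENTIAL,
    "public-chatbot": DataClass.PUBLIC,
}

# Every decision is recorded so that usage stays auditable.
audit_log = []


def may_share(tool: str, data: DataClass) -> bool:
    """Allow sharing only with approved tools, up to their ceiling."""
    ceiling = TOOL_POLICY.get(tool)  # None means the tool is not approved
    allowed = ceiling is not None and data <= ceiling
    audit_log.append((tool, data.name, allowed))
    return allowed


print(may_share("enterprise-assistant", DataClass.INTERNAL))  # True
print(may_share("public-chatbot", DataClass.INTERNAL))        # False
print(may_share("unlisted-tool", DataClass.PUBLIC))           # False
```

The design choice worth noting is the default: a tool that does not appear in the policy is denied, which is what makes Shadow AI visible instead of silently tolerated.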

Engineer safety into the development lifecycle. Development pipelines must evolve to include additional checks for security vulnerabilities, licensing conflicts, and architectural integrity.

That means extending secure coding standards to AI-generated code, ensuring it passes through existing security and dependency checks, and periodically testing for recurring vulnerabilities.

AI-generated code should be treated not as trusted output, but as unverified input that requires validation.
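
In pipeline terms, "unverified input" might look like a merge gate that stays closed until every required check has reported a pass. This is a minimal sketch under stated assumptions: the check names and the `Change` type are hypothetical, and a real pipeline would wire them to its own scanners.

```python
from dataclasses import dataclass, field

# Hypothetical check names; a real pipeline would map these to its own tools.
REQUIRED_CHECKS = ("security_scan", "dependency_audit", "license_check")


@dataclass
class Change:
    """A proposed code change and the results of checks run against it."""
    description: str
    check_results: dict = field(default_factory=dict)  # check name -> passed?


def outstanding_checks(change: Change) -> list:
    """Return required checks that have not run or did not pass."""
    return [name for name in REQUIRED_CHECKS
            if not change.check_results.get(name, False)]


def can_merge(change: Change) -> bool:
    """A change is trusted only once every required check has passed."""
    return not outstanding_checks(change)


patch = Change(
    description="AI-generated fix for checkout rounding",
    check_results={"security_scan": True, "dependency_audit": True},
)
print(outstanding_checks(patch))  # ['license_check']
print(can_merge(patch))           # False

patch.check_results["license_check"] = True
print(can_merge(patch))           # True
```

A check that never ran counts the same as a check that failed, so skipping a step cannot be mistaken for passing it.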

Preserve human accountability. Even when AI contributes to code generation, humans must remain responsible for the systems they operate.

That means ensuring critical workflows—across change management, deployment pipelines, and infrastructure—do not allow AI-initiated actions to bypass human review.

AI can assist. It should not act unchecked in production systems.
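
A human-review requirement of this sort can be sketched as a small gate in front of high-impact actions. The action names and the `ActionRequest` type below are assumptions for illustration; which actions count as high-impact is a decision each organisation would make for itself.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical set of actions treated as high-impact in production.
HIGH_IMPACT_ACTIONS = {
    "deploy_to_production",
    "modify_infrastructure",
    "delete_resource",
}


@dataclass
class ActionRequest:
    """A requested operation, who started it, and who (if anyone) approved it."""
    action: str
    initiated_by_ai: bool
    approved_by: Optional[str] = None  # identifier of the human reviewer


def may_execute(request: ActionRequest) -> bool:
    """Block AI-initiated high-impact actions until a human has approved them."""
    needs_review = request.initiated_by_ai and request.action in HIGH_IMPACT_ACTIONS
    if needs_review:
        return request.approved_by is not None
    return True


print(may_execute(ActionRequest("delete_resource", initiated_by_ai=True)))   # False
print(may_execute(ActionRequest("delete_resource", initiated_by_ai=True,
                                approved_by="on-call-engineer")))            # True
print(may_execute(ActionRequest("run_unit_tests", initiated_by_ai=True)))    # True
```

Recording the approver's identity alongside the action also gives a direct answer to the accountability question: there is always a named person behind a production change.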

Leadership is still catching up

One uncomfortable reality remains: governance structures are still evolving.

In many organisations, the adoption of AI coding tools has been driven primarily by engineering teams eager to increase productivity. Executive leadership and boards often encounter the implications only after the technology is already embedded in critical workflows.

Enterprise adoption of AI-assisted development tools is advancing faster than the governance frameworks designed to oversee them.

For boards and CEOs, the challenge is not to slow the technology down. It is to ensure that the systems governing it evolve just as quickly.

AI-assisted coding is no longer simply a developer productivity tool. It is becoming part of the operating infrastructure of modern enterprises.

Which means its governance must ultimately sit where enterprise risk always does: with leadership.

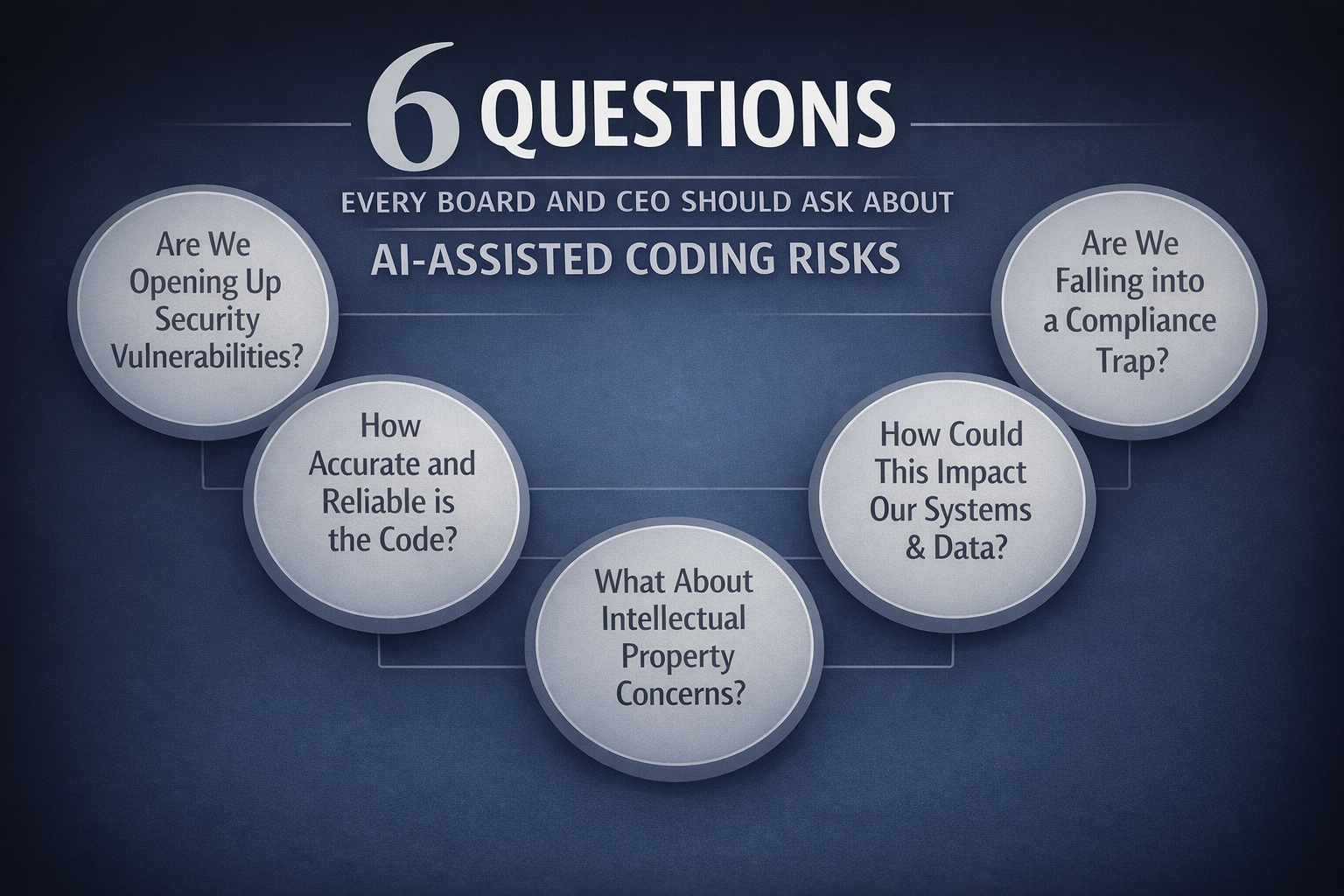

For boards and CEOs, the shift begins with a few fundamental questions.

Answering those questions, in turn, requires clear structures, defined accountability, and consistent oversight.

Organisations are beginning to establish cross-functional AI governance forums, assign explicit ownership for AI systems, and review AI-related incidents with the same seriousness as major outages—treating them not as anomalies, but as signals of systemic risk.

Speed without governance can amplify risk just as quickly as it amplifies innovation.

The real lesson of the Kiro incident

In hindsight, the Amazon incidents will likely be remembered less for the outages themselves than for what they revealed about the next phase of software engineering.

AI-assisted development allows software to evolve at unprecedented speed. Systems increasingly interact with other systems, and software agents are beginning to participate directly in operational workflows.

The takeaway is not that AI-assisted coding is dangerous. It is that speed without governance amplifies risk just as quickly as it amplifies innovation.

The organisations that succeed in this new environment will not simply be those that adopt AI tools fastest. They will be the ones that ensure machine capability is matched by human oversight, organisational accountability, and resilient infrastructure.

AI will reshape how software is built. But it will also reshape how enterprises must govern the systems they depend on.

The real challenge for leaders is not adoption. It is control.

Dig Deeper

For readers interested in exploring how AI is reshaping software development—and the governance challenges that follow:

Essay (Jan 2026) — Dario Amodei, “The Adolescence of Technology”

Argues that we are entering a phase of unprecedented technological capability without the institutional maturity to govern it—framing AI as a test of our ability to manage risk, control, and accountability.

https://www.darioamodei.com/essay/the-adolescence-of-technology

Talk (Jun 2025) — Andrej Karpathy, “Software Is Changing (Again)”

Explains how software development is shifting toward natural-language interfaces—and why code is now being generated faster than it can be governed.

https://www.ycombinator.com/library/MW-andrej-karpathy-software-is-changing-again

Study (2024) — McKinsey & Company Global Survey on AI

Shows how rapidly AI adoption is scaling across enterprises—and how governance frameworks are struggling to keep pace.

https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai

Founding Fuel is sustained by readers who value depth, context, and independent thinking.

If this essay helped you think more clearly, you may choose to support our work.

Chirantan Ghosh

Seasoned technologist | Growth architect and business leader

Chirantan "CG" Ghosh is a seasoned technologist with a growth mindset and a penchant for painting. Ghosh brings over 28 years of experience leading cross-functional global teams in sales, technology, marketing, and communications for prominent brands including Capgemini, Google, Sony, Tata Consultancy Services (TCS), and Verifone.

Currently, he heads the Growth and Insights function of TCS (revenue: $26 billion) across all domains for APAC-MEA-LATAM geographies, and Public Services across the global markets. His new AI-fueled Growth IP uncovers and converts $100+M revenue whitespaces for key logos (prospects or strategic customers).

Beyond his corporate leadership, Chirantan is an accomplished contemporary artist. His public exhibitions began at the Solomon R. Guggenheim Museum in New York when he was just nineteen. Since then, his works have been exhibited in cultural hubs of the bohemian lifestyle, including Los Angeles, Chicago, Washington D.C., Paris, London, Dubai, and The Hague.

He frequently speaks on topics related to technology, business transformation, change management, marketing, and philanthropy.
